The later part of the game features a classic labyrinth puzzle. This post is a step by step walkthrough how I used Midjourney and Stable diffusion to achieve the location and the compromises we needed to make along the way.

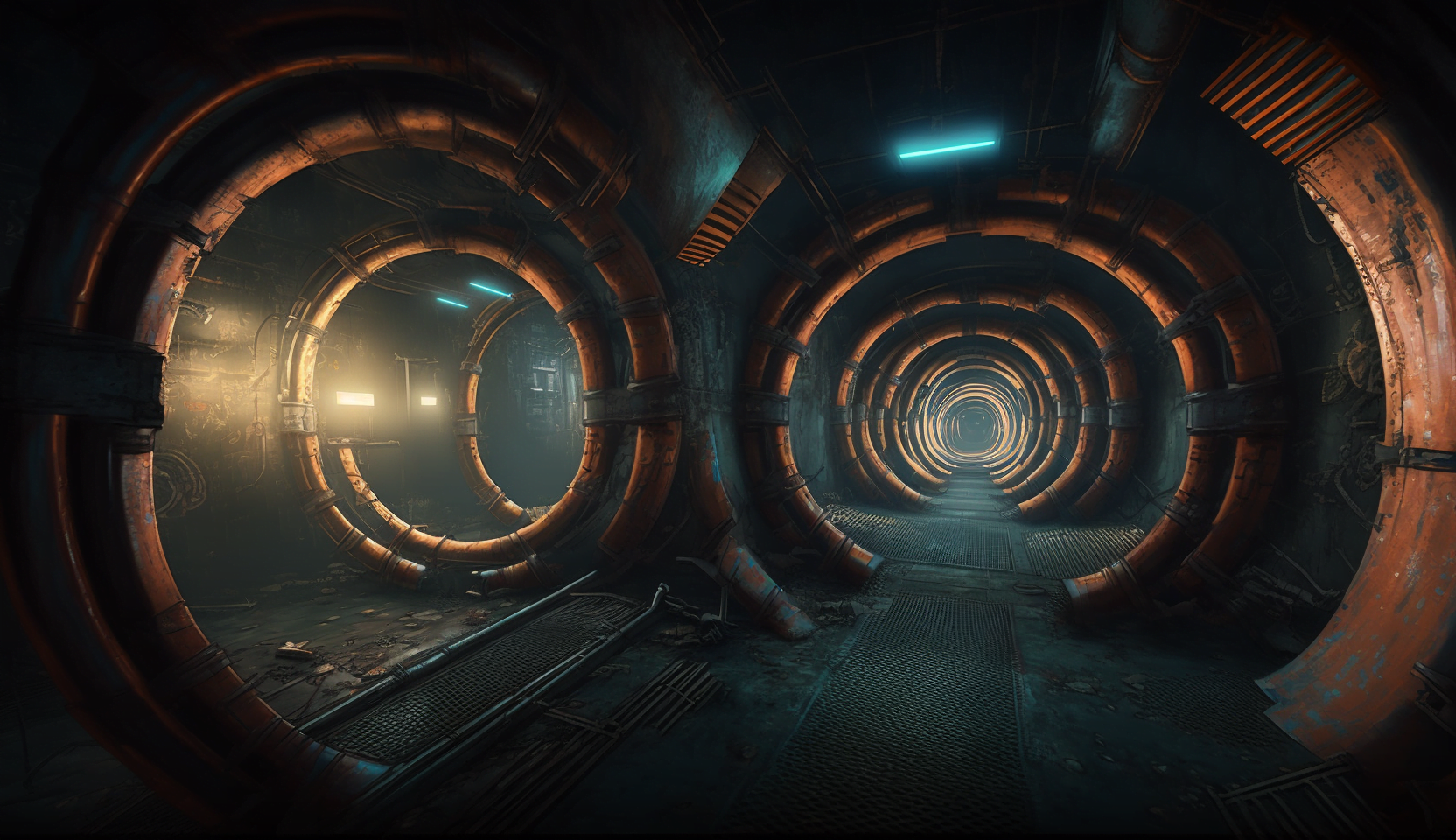

As we were working on the script, we came up with a scene in the game where you need to traverse a sewer network to try and find a specific location. This required us to create multiple images that felt they were from a single location with branching tunnels. As usual, we had a general idea of what we wanted and then set on our way to see if we could Make it happen using AI.

The gameplay idea of the labyrinth was to create a mechanic that randomly loads a new room when you enter any door in the maze, making it a different experience every time. In each room, you will have a way of figuring out what door is the correct door, and once you simply choose the correct door a set number of times, you get trough the labyrinth.

The Prompting

Midjourney

I started with Midjourney to see what sort of sewer locations it would give me. After working on the prompt for some hours it became apparent that the output was pretty limited.

The first test was just to see what a simple corridor with pipes would produce. This was not what I was looking for. It did not look like a sewer at all. It was more like a city street.

Top down views were not great either. These scenes hardly looked like a labyrinth with branching passages.

I decided to use a reference image for more control over the end result.

I simply ran a google image search and ended up using this image as a simple reference image.

This started to produce results that were more along the lines that I wanted to get. But I was only able to get straight tunnels. I wanted branching paths so that the player would have to decide which way to go in each screen.

I generated a ton of images with Midjourney. This selection shown here is only the ones that I picked from the much larger batch of generations. These looked great, but lacked the features of branching pathways I was after.

Scenario

I decided to try stable diffusion for the scene generation next. I ended up choosing the online tool called Scenario. I have been testing these online tools that allow you to train a custom stable diffusion model from time to time.

I started by training a custom model based on the images generated by Midjourney.

This was pretty simple. In Scenario you just click on Create Generator and give it a name and upload some images. If the images are not square, you are provided with a tool for cropping them. I simply cropped everything to the center without much though.

Now that we have a model trained on the sewer images we created earlier, we can get to work on seeing if those branching tunnels are finally possible!

Again, to improve my changes and to unify the style more, I used a source image ti go along with the prompt.

The prompt for the final sewer images ended up being “sewer tunnels cross-roads junction pathway crossing brick walls pipes high-quality masterpiece HDR multiple ways”. I had the sampling steps set all the way up to 150.

The images were not as contrasty or cinematic as the source generation in Midjourney, but this has a lot to do with the source image I chose to go with. I like the low contrast, moody look that these images have. I am planning to add a lighting pass on the backgrounds to emphasise the darkness of the scenes and have a moving light with the player.

But the mages had the main feature I was looking for: branching tunnels! every single generation had 2 or more exits in them, which was perfect for our use-case.

I believe that the branching is happening because in all of my square training images there is a tunnel leading into the depth. And when Stable Diffusion uses this square training data to fill a wide image, it ends up drawing multiple tunnels as it tiles the training data on the wider image area. This works for me!

The image resolution for the images generated in Scenario was only 912×512, but I used a free tool called Upscayl to bump them up to 3648 x 2048. This is already plenty for in-game use!

These images are a lot more abstract and lot more AI looking than the Midjourney generations, but I believe that they will work absolutely great for this use. The style is very consistent between each image and I can generate an endless amount of them with very high success rate.

However it still remains to be seen how these Stable Diffusion generated images mesh in with the Midjourney material.

Overpainting

It was now time to create the layers required for the lighting effects. The reflection masks, the shadows and the light pass.

As I was overpainting these images, watching them in full scale in Photoshop, I could not shake the feeling that they were not as detailed as I would have liked. I compared them to my previous locations and they were just as “wonky”, but these new ones created with my custom model lacked that final layer of detail that I think is required.

Back in Midjourney

I decided to see what would happen if I took one of my edited Scenario outputs and imported that as a source to Midjourney.

To my surprise, Midjourney was now able to get over itself and give me branching sewers. It just needed the nudge from Scenario. I am not sure why a photo of a branching tunnel did not give me branching tunnels, but an image generated from data that Midjourney produced gave me the tunnels. There is really no telling why these things work the way they do sometimes.

First, I kept getting very similar images, but once I stumbled upon one that was more interesting, I copied the seed, 2692671247, from it and added it to a remix of the prompt.

I think that still, the overall compositions of the image were not as interesting and varied as the ones that Scenario gave me. Scenario also had a lot more traditional sewer feel to the images. But the overall finish of the images from midjourney was much more in line with the other locations of the game, which were naturally generated with Midjourney as well. I believe that this is a solid compromise I can live with. After all, this is not a very important location, it is just a simple puzzle that requires a futuristic sewer/tunnel with branching pathways.

This was not as simple of a location to generate as the previous ones have been. It is crazy how with generative AI the perspective of what is a long process and what is not shifts. Usually a location takes around a hour to generate with AI, often times less. But I spend 6 hours on prompting alone on this location. With traditional modeling methods a location like this would take days to generate, maybe weeks!

AI vs 3D modeling workflow

I decided to write down some thoughts on AI vs more traditional methods of image generation.

Pros

AI

Fast

Cheap

Minimal training required (days)

Can be used by pretty much anyone

3D

High quality

Completely controllable

Reusable

Editable to a massively high degree

Modular

No compromises

Cons

AI

Random

Difficult to edit

No reuse of parts of the generation

Imprecise

Bound to limitations of training data

Requires compromise

3D

Very slow (when compared to AI)

Expensive

Years of training required

Requires one or more specialists in the team

The limitations of AI were absolutely on display in the creation of this location. With traditional methods, it would have been easy to create a procedural toolset to create branching sewer pipes. With a procedural sewer tool we could have generated any combination of pathways and cross-roads we desired very very fast, after the initial set up time (what could have been weeks). Naturally you also require an experienced 3D artist with knowledge of procedural workflows as a part of the team (this we have).

https://www.artstation.com/artwork/Krndbx A description of the pipe tool Neon Giant created for The Ascent.

We could have also kept in our initial puzzle core of having to use items on the pipes on the walls to see which way the water was flowing. But with Ai, these pipes simply never materialised. Our vision was not possible with AI tools. This required us to rethink the puzzle mechanic of this location.

But because of time and budget limitations (there is no time or budget) we need to conform to the quirks of the AI. When working with AI we must be prepared to change our story, our characters our gameplay ideas based on the limits we hit when generating these assets.

In the next part of the labyrinth we will create the 3D models and gameplay functionality for a random maze in Unity.

Leave a Reply